Quiz

Overview

Litware, Inc. is a manufacturing company that has offices throughout North America. The analytics team at Litware contains data engineers, analytics engineers, data analysts, and data scientists.

Existing Environment

Litware has been using a Microsoft Power BI tenant for three years. Litware has NOT enabled any Fabric capacities and features.

Fabric Environment

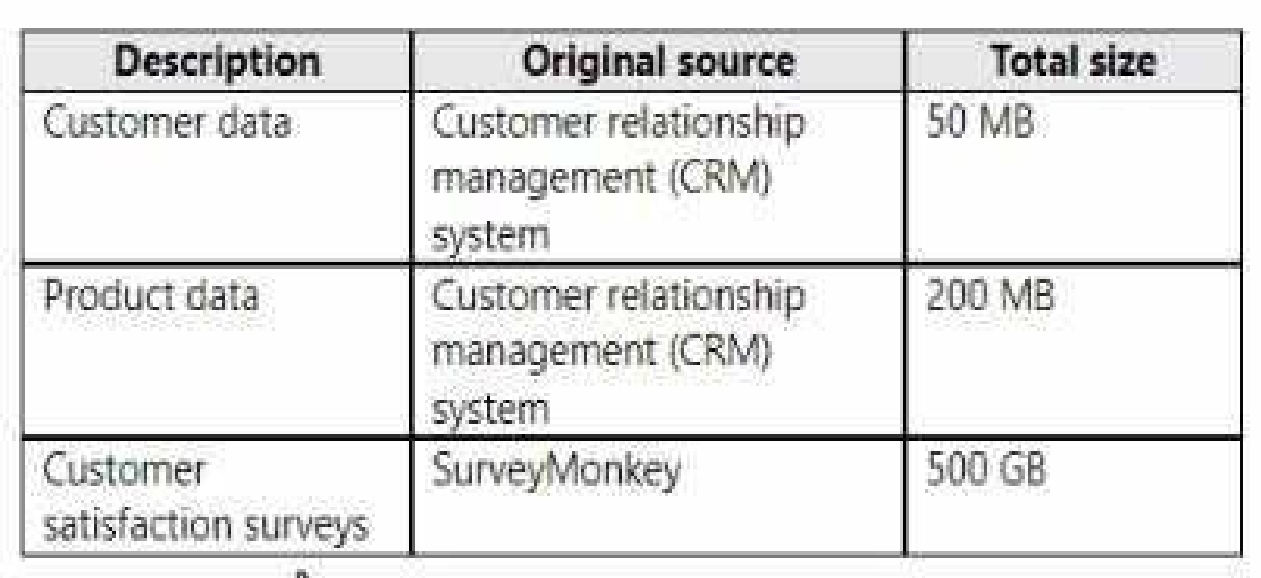

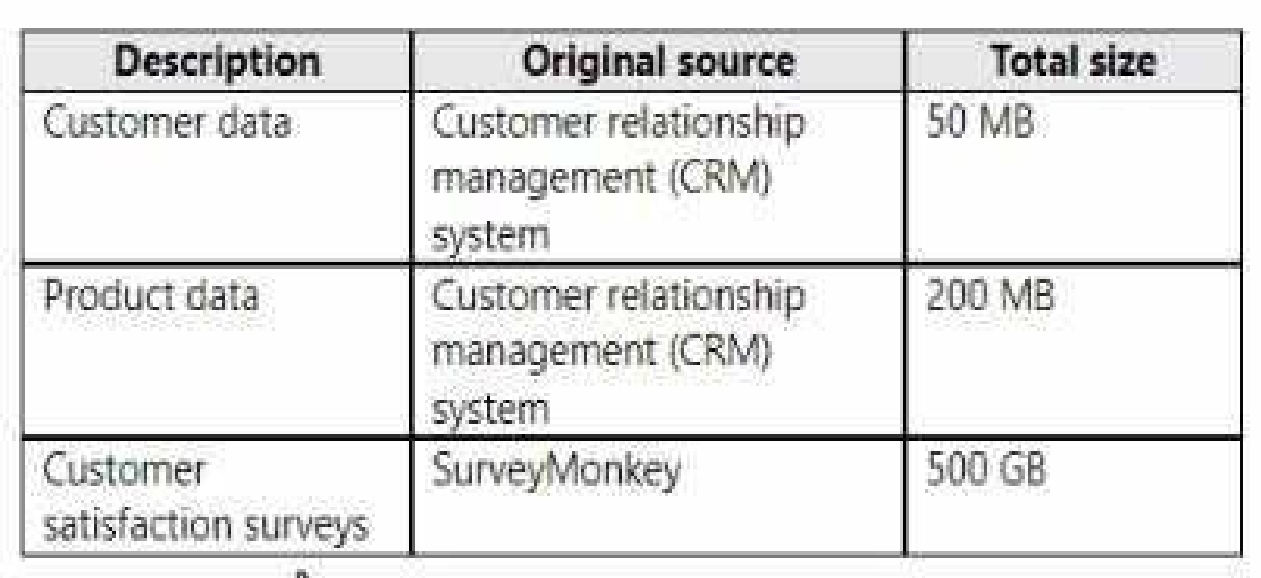

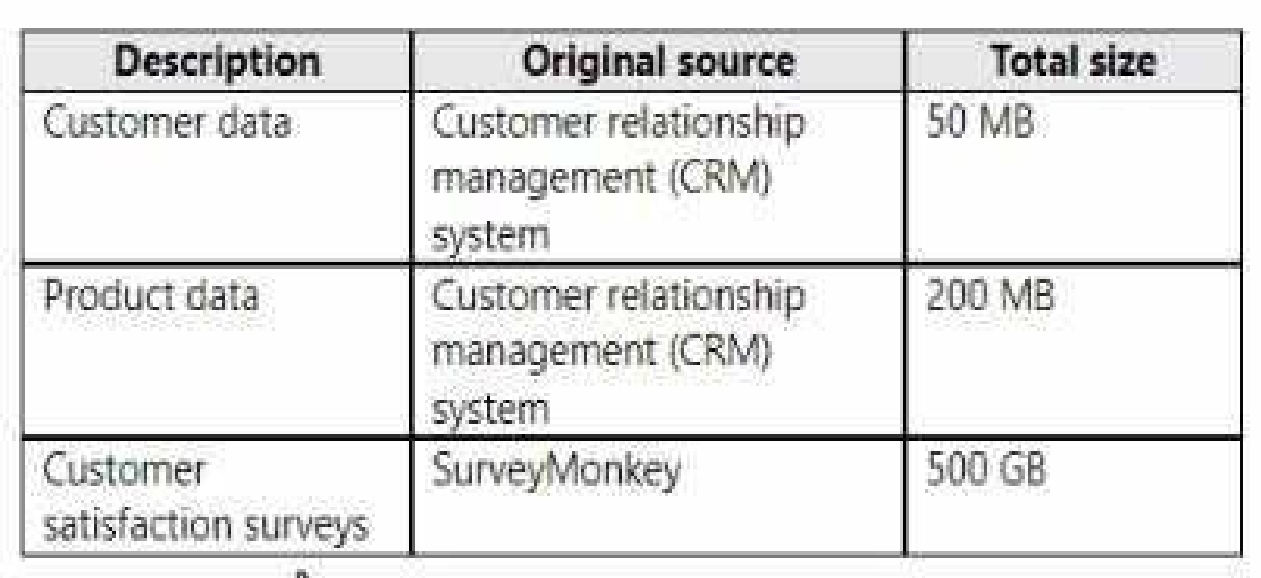

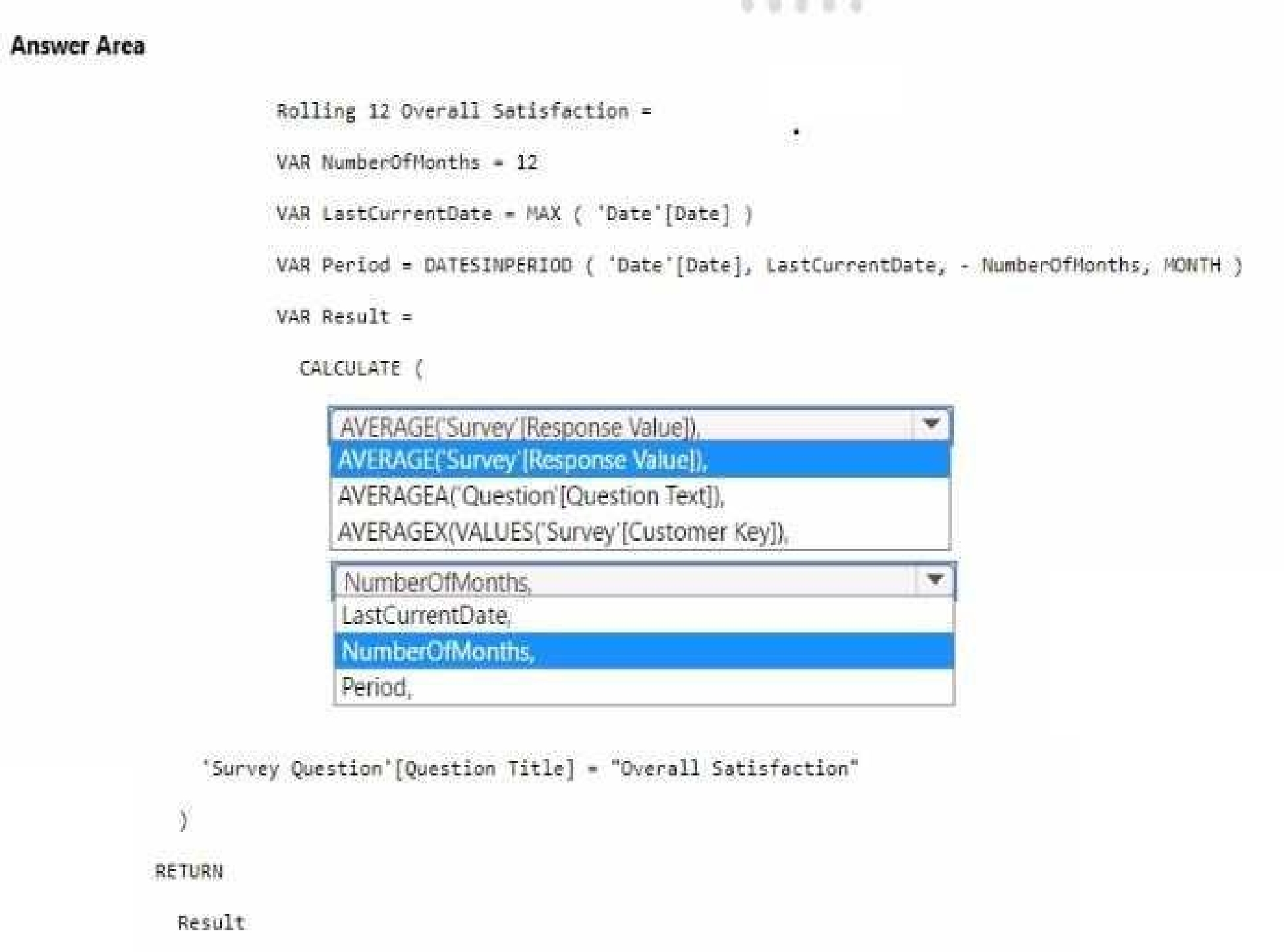

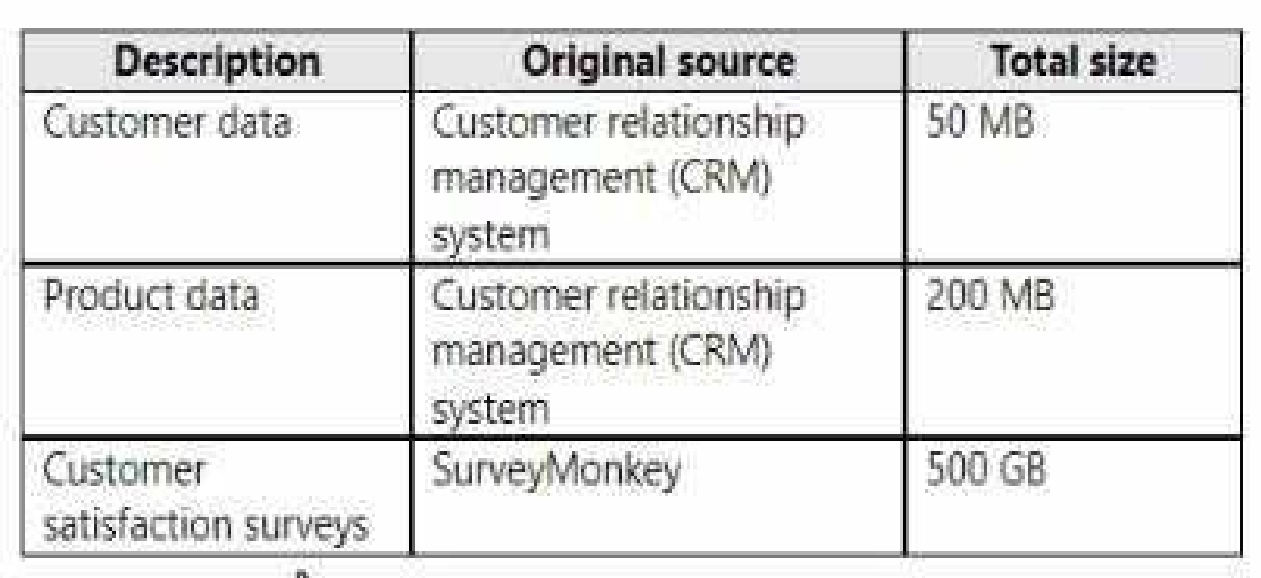

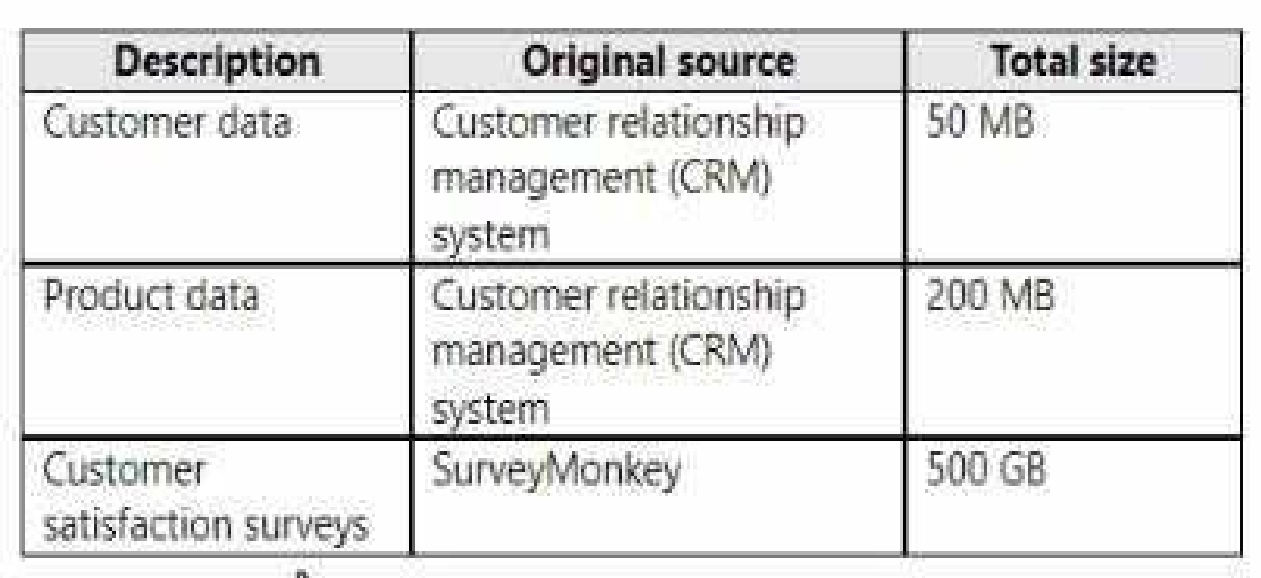

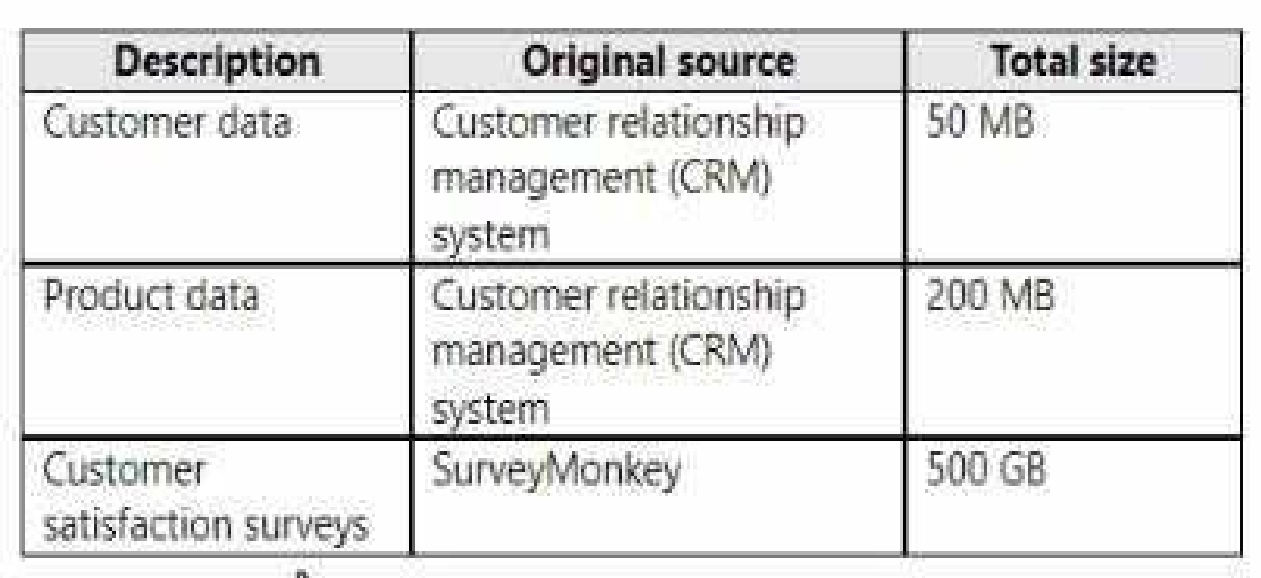

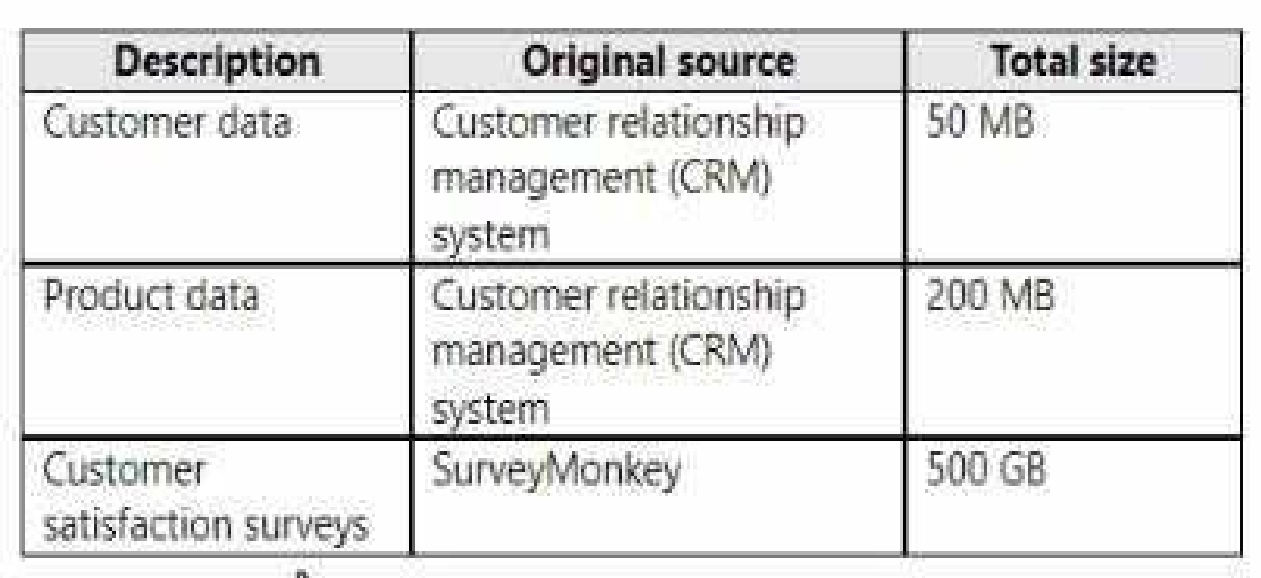

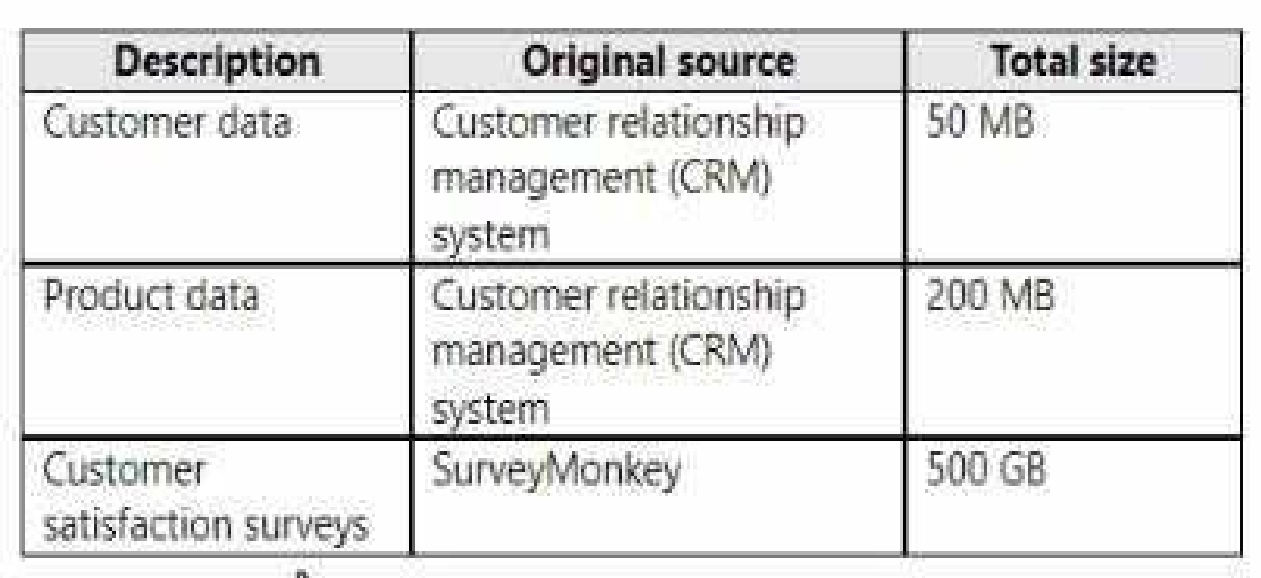

Litware has data that must be analyzed as shown in the following table.

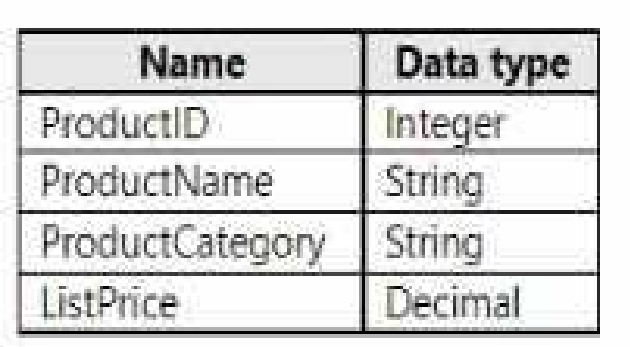

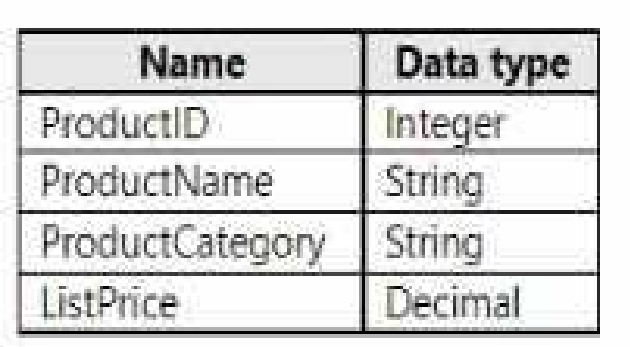

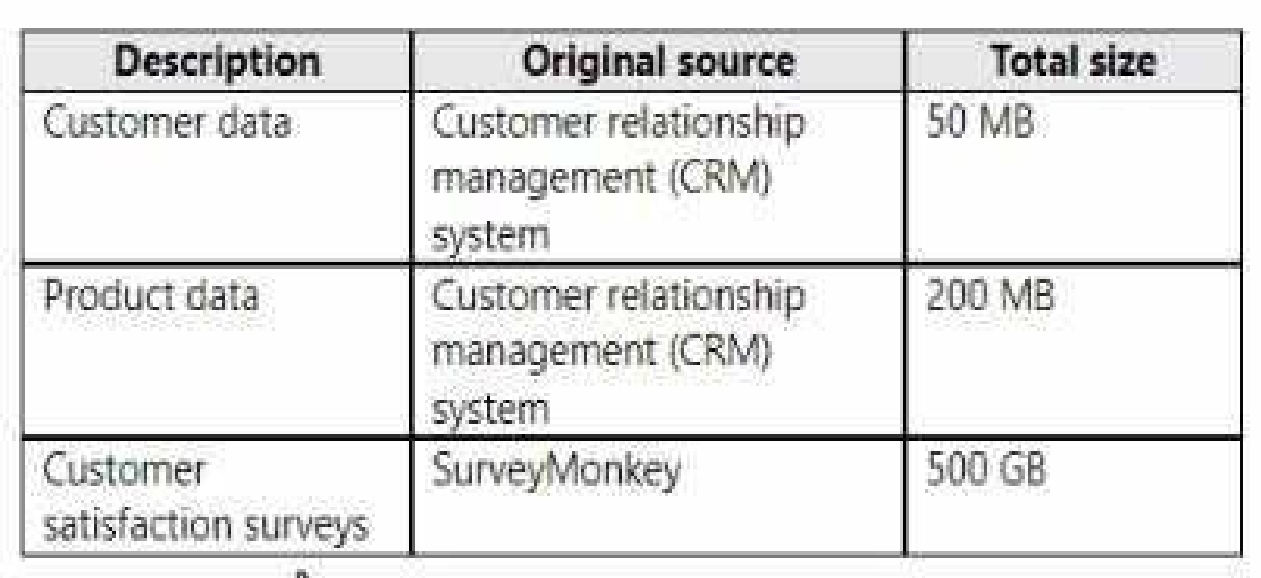

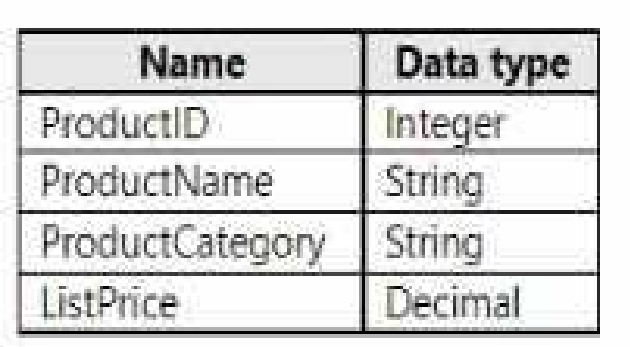

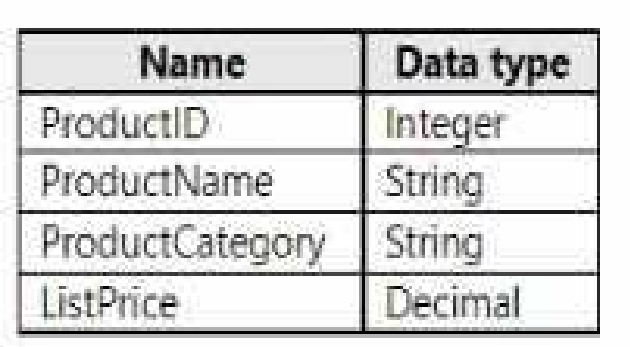

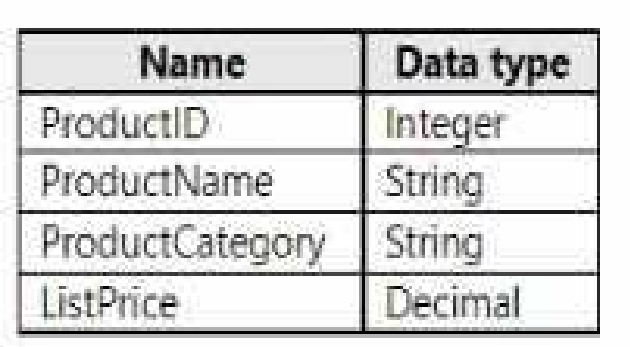

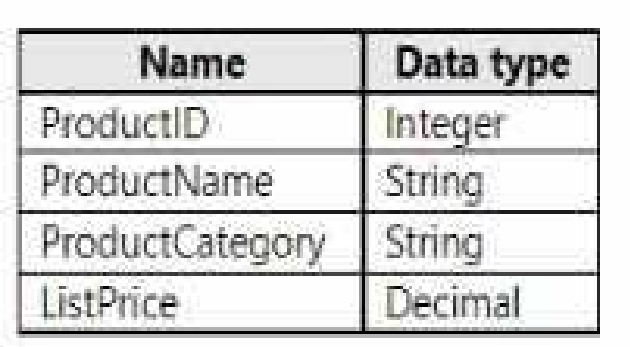

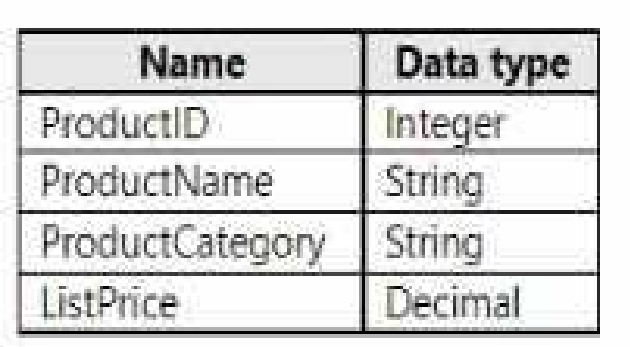

The Product data contains a single table and the following columns.

The customer satisfaction data contains the following tables:

• Survey

• Question

• Response

For each survey submitted, the following occurs:

• One row is added to the Survey table.

• One row is added to the Response table for each question in the survey.

The Question table contains the text of each survey question. The third question in each survey response is an overall satisfaction score. Customers can submit a survey after each purchase.

User Problems

The analytics team has large volumes of data, some of which is semi-structured. The team wants to use Fabric to create a new data store.

Product data is often classified into three pricing groups: high, medium, and low. This logic is implemented in several databases and semantic models, but the logic does NOT always match across implementations.

Planned Changes

Litware plans to enable Fabric features in the existing tenant. The analytics team will create a new data store as a proof of concept (PoC). The remaining Litware users will only get access to the Fabric features once the PoC is complete. The PoC will be completed by using a Fabric trial capacity.

The following three workspaces will be created:

• AnalyticsPOC: Will contain the data store, semantic models, reports, pipelines, dataflows, and notebooks used to populate the data store

• DataEngPOC: Will contain all the pipelines, dataflows, and notebooks used to populate OneLake

• DataSciPOC: Will contain all the notebooks and reports created by the data scientists

The following will be created in the AnalyticsPOC workspace:

• A data store (type to be decided)

• A custom semantic model

• A default semantic model

• Interactive reports

The data engineers will create data pipelines to load data to OneLake either hourly or daily depending on the data source. The analytics engineers will create processes to ingest, transform, and load the data to the data store in the AnalyticsPOC workspace daily. Whenever possible, the data engineers will use low-code tools for data ingestion. The choice of which data cleansing and transformation tools to use will be at the data engineers' discretion.

All the semantic models and reports in the AnalyticsPOC workspace will use the data store as the sole data source.

Technical Requirements

The data store must support the following:

• Read access by using T-SQL or Python

• Semi-structured and unstructured data

• Row-level security (RLS) for users executing T-SQL queries

Files loaded by the data engineers to OneLake will be stored in the Parquet format and will meet Delta Lake specifications.

Data will be loaded without transformation in one area of the AnalyticsPOC data store. The data will then be cleansed, merged, and transformed into a dimensional model.

The data load process must ensure that the raw and cleansed data is updated completely before populating the dimensional model.
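The ordering requirement above can be pictured as a simple gate: the dimensional model is rebuilt only after every upstream load reports success. The following is a minimal Python sketch of that idea, not a Fabric pipeline definition. The function names (`run_daily_load`, `load_raw`, `load_cleansed`, `build_model`) are hypothetical stand-ins for the real load activities.

```python
from typing import Callable, List


def run_daily_load(
    upstream_steps: List[Callable[[], bool]],
    build_dimensional_model: Callable[[], None],
) -> bool:
    """Run every upstream step; build the dimensional model only if all succeed."""
    for step in upstream_steps:
        if not step():
            # An upstream layer is incomplete, so the model is left untouched.
            return False
    build_dimensional_model()
    return True


# Hypothetical stand-ins for the real load activities.
log: List[str] = []


def load_raw() -> bool:
    log.append("raw")
    return True


def load_cleansed() -> bool:
    log.append("cleansed")
    return True


def build_model() -> None:
    log.append("dimensional")


succeeded = run_daily_load([load_raw, load_cleansed], build_model)
```

In an orchestration tool, the same gate is usually expressed as an on-success dependency between activities rather than as explicit code.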

The dimensional model must contain a date dimension. There is no existing data source for the date dimension. The Litware fiscal year matches the calendar year. The date dimension must always contain dates from 2010 through the end of the current year.
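Because no source exists for the date dimension, it has to be generated. The sketch below shows one way to produce a row per day from January 1, 2010 through December 31 of the current year. It is an illustration in plain Python, and the column names (`DateKey`, `Date`, `Year`, `Quarter`, `Month`) are assumptions rather than names taken from the case study.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional


def build_date_dimension(today: Optional[date] = None) -> List[Dict[str, object]]:
    """Return one row per day from 2010-01-01 through the end of the current year."""
    today = today or date.today()
    start = date(2010, 1, 1)
    end = date(today.year, 12, 31)  # recomputed on every run, so the range grows each year

    rows: List[Dict[str, object]] = []
    current = start
    while current <= end:
        rows.append(
            {
                "DateKey": current.year * 10000 + current.month * 100 + current.day,
                "Date": current,
                # The fiscal year matches the calendar year, so no separate fiscal columns are needed.
                "Year": current.year,
                "Quarter": (current.month - 1) // 3 + 1,
                "Month": current.month,
            }
        )
        current += timedelta(days=1)
    return rows


rows = build_date_dimension(date(2024, 5, 17))
```

Deriving the end date from the run date, instead of hard-coding it, is what keeps the dimension extending through the end of the current year on each reload.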

The product pricing group logic must be maintained by the analytics engineers in a single location. The pricing group data must be made available in the data store for T-SQL queries and in the default semantic model. The following logic must be used:

• List prices that are less than or equal to 50 are in the low pricing group.

• List prices that are greater than 50 and less than or equal to 1,000 are in the medium pricing group.

• List prices that are greater than 1,000 are in the high pricing group.
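The boundary values in these rules are easy to get wrong, so it can help to state them as a small function. This is a sketch in Python for illustration only. The function name `pricing_group` is hypothetical, and the case study expects the real logic to live in the data store.

```python
def pricing_group(list_price: float) -> str:
    """Classify a list price into the low, medium, or high pricing group."""
    if list_price <= 50:
        return "low"      # less than or equal to 50
    if list_price <= 1000:
        return "medium"   # greater than 50 and less than or equal to 1,000
    return "high"         # greater than 1,000
```

Note that both 50 and 1,000 belong to the lower of the two adjacent groups.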

Security Requirements

Only Fabric administrators and the analytics team must be able to see the Fabric items created as part of the PoC. Litware identifies the following security requirements for the Fabric items in the AnalyticsPOC workspace:

• Fabric administrators will be the workspace administrators.

• The data engineers must be able to read from and write to the data store. No access must be granted to datasets or reports.

• The analytics engineers must be able to read from, write to, and create schemas in the data store. They also must be able to create and share semantic models with the data analysts and view and modify all reports in the workspace.

• The data scientists must be able to read from the data store, but not write to it. They will access the data by using a Spark notebook.

• The data analysts must have read access to only the dimensional model objects in the data store. They also must have access to create Power BI reports by using the semantic models created by the analytics engineers.

• The date dimension must be available to all users of the data store.

• The principle of least privilege must be followed.

Both the default and custom semantic models must include only tables or views from the dimensional model in the data store.

Litware already has the following Microsoft Entra security groups:

• FabricAdmins: Fabric administrators

• AnalyticsTeam: All the members of the analytics team

• DataAnalysts: The data analysts on the analytics team

• DataScientists: The data scientists on the analytics team

• DataEngineers: The data engineers on the analytics team

• AnalyticsEngineers: The analytics engineers on the analytics team

Report Requirements

The data analysts must create a customer satisfaction report that meets the following requirements:

• Enables a user to select a product to filter customer survey responses to only those who have purchased that product

• Displays the average overall satisfaction score of all the surveys submitted during the last 12 months up to a selected date

• Shows data as soon as the data is updated in the data store

• Ensures that the report and the semantic model only contain data from the current and previous year

• Ensures that the report respects any table-level security specified in the source data store

• Minimizes the execution time of report queries

AnalyticsPOC workspace?

Quiz

meet the security requirements.

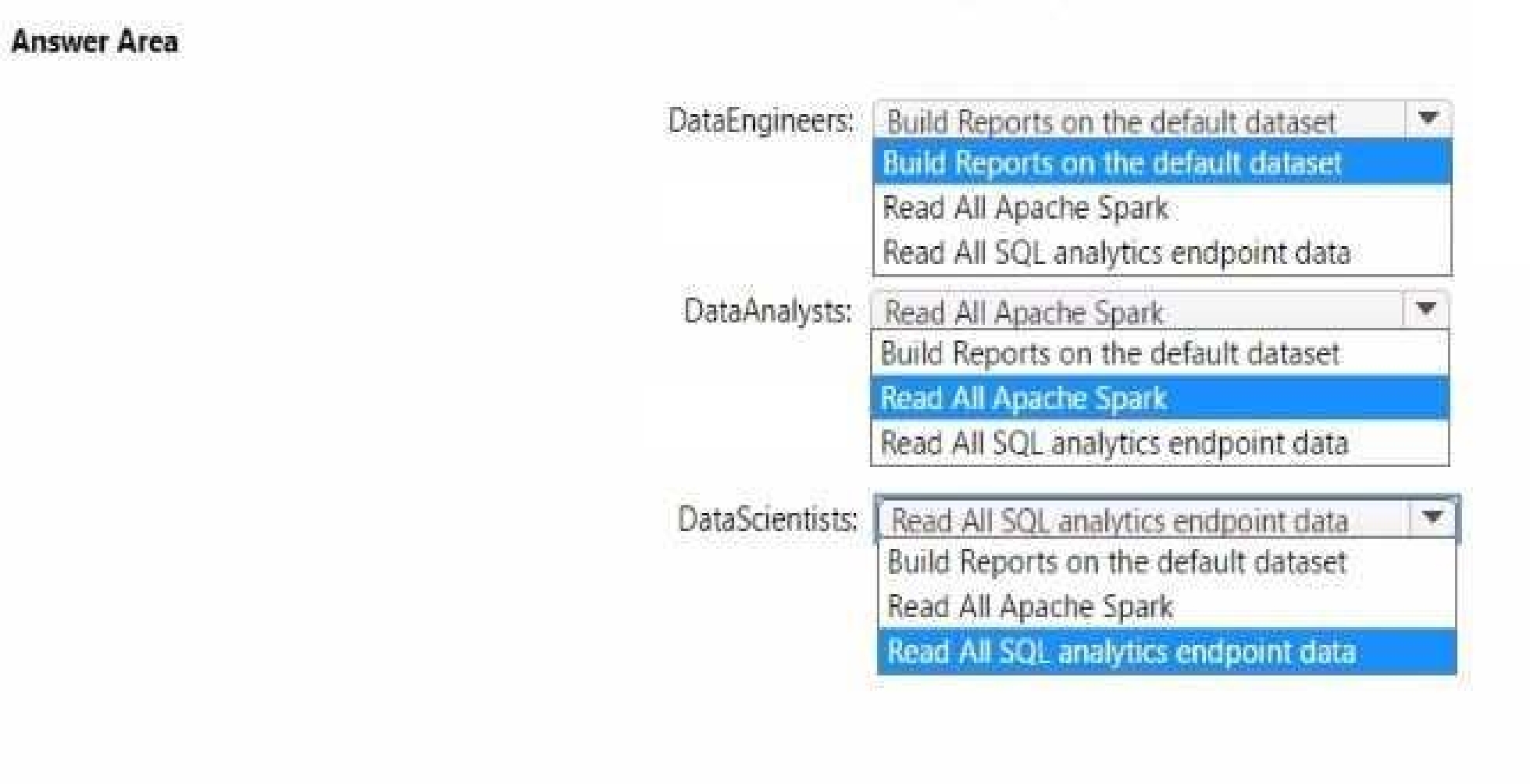

Which additional permissions should you assign when you share the data store? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Data Analysts: Read All Apache Spark

Data Scientists: Read All SQL analytics endpoint data

The permissions for the data store in the AnalyticsPOC workspace should follow the principle of least privilege:

• Data engineers need read and write access to the data store, but no access to datasets or reports.

• Data analysts require read access only to the dimensional model objects, plus the ability to create Power BI reports.

• Data scientists need read access through Spark notebooks.

These settings give each role the permissions needed to fulfill its responsibilities without exceeding its required access level.

Quiz

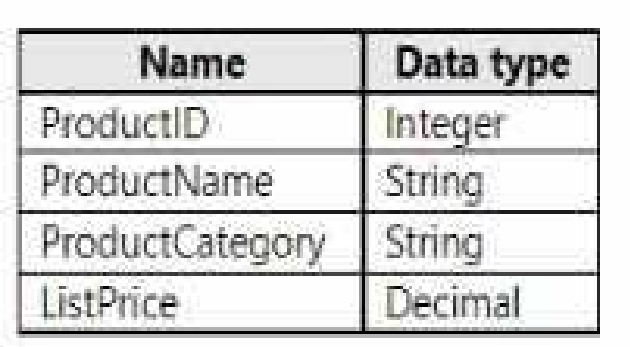

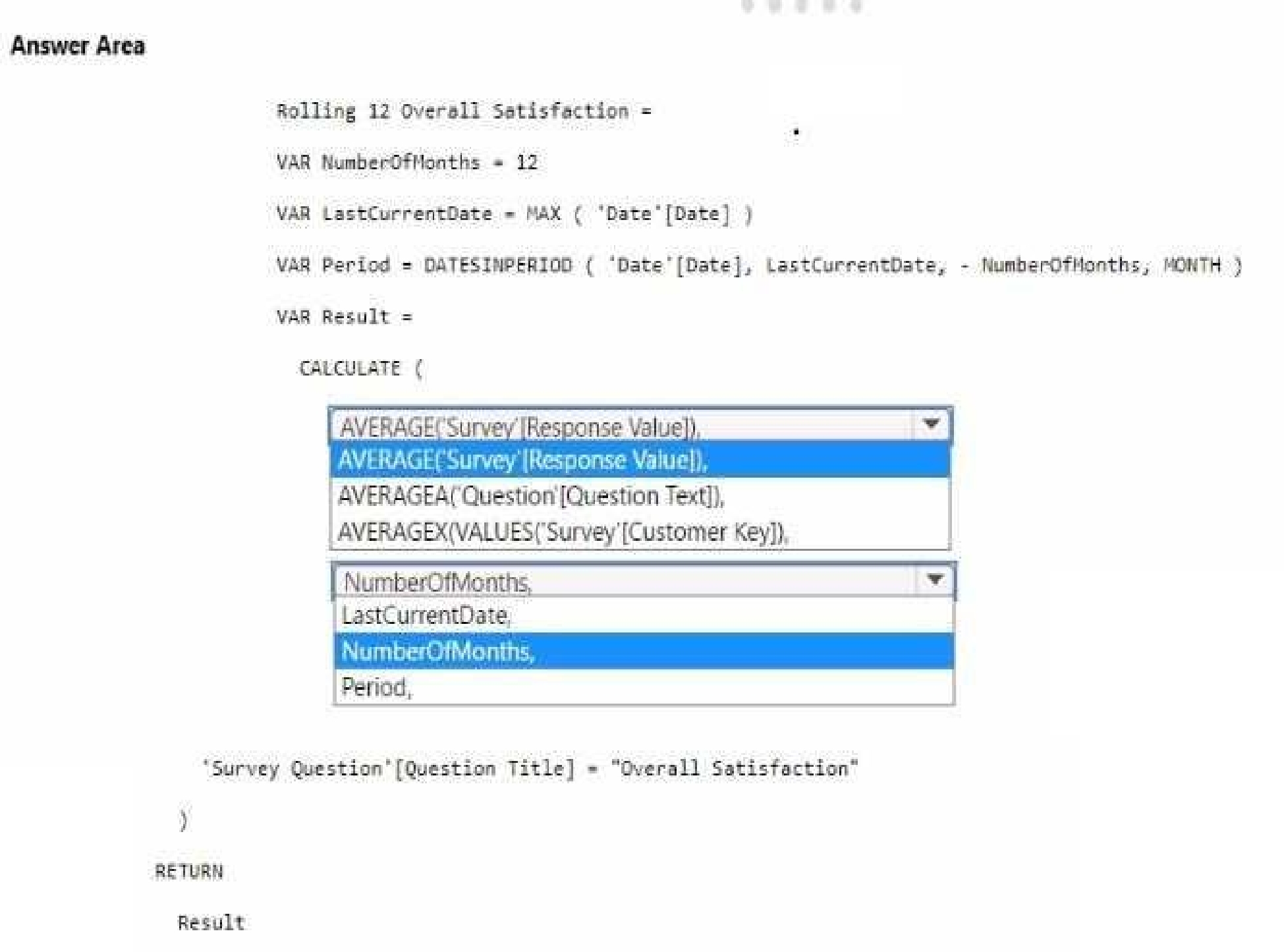

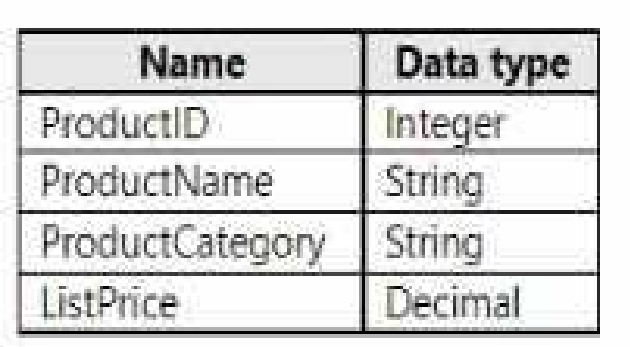

How should you complete the DAX code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

The measure should reference the Response Value column from the 'Survey' table.

The 'Number of months' value should be used to define the period for the average calculation.

To calculate the average overall satisfaction score in DAX, apply the AVERAGE function to the response values for the overall satisfaction question. The DATESINPERIOD function defines the rolling window that covers the last 12 months up to the selected date.
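The rolling window can be easier to reason about outside DAX. The following Python sketch approximates the window that DATESINPERIOD produces with an interval of -12 months: every date after the same day one year earlier, up to and including the selected date. It assumes each survey's overall satisfaction score is already available as a (submission date, score) pair. The function names and the sample data are hypothetical.

```python
from datetime import date
from statistics import mean
from typing import List, Optional, Tuple


def one_year_before(selected: date) -> date:
    """Return the same calendar day one year earlier (Feb 29 maps to Feb 28)."""
    try:
        return selected.replace(year=selected.year - 1)
    except ValueError:
        return selected.replace(year=selected.year - 1, day=28)


def rolling_satisfaction(
    responses: List[Tuple[date, float]], selected: date
) -> Optional[float]:
    """Average the scores submitted in the 12 months ending on the selected date."""
    window_start = one_year_before(selected)
    # The window excludes window_start itself and includes the selected date.
    scores = [score for submitted, score in responses if window_start < submitted <= selected]
    return mean(scores) if scores else None


# Hypothetical sample data: (submission date, overall satisfaction score).
responses = [
    (date(2023, 1, 15), 2),   # older than 12 months, excluded
    (date(2023, 7, 1), 4),    # first day of the window, included
    (date(2024, 3, 10), 5),
    (date(2024, 6, 30), 3),   # the selected date itself, included
    (date(2024, 7, 1), 1),    # after the selected date, excluded
]
average = rolling_satisfaction(responses, date(2024, 6, 30))
```

Returning `None` for an empty window mirrors how an average over no rows yields a blank rather than zero.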

Quiz

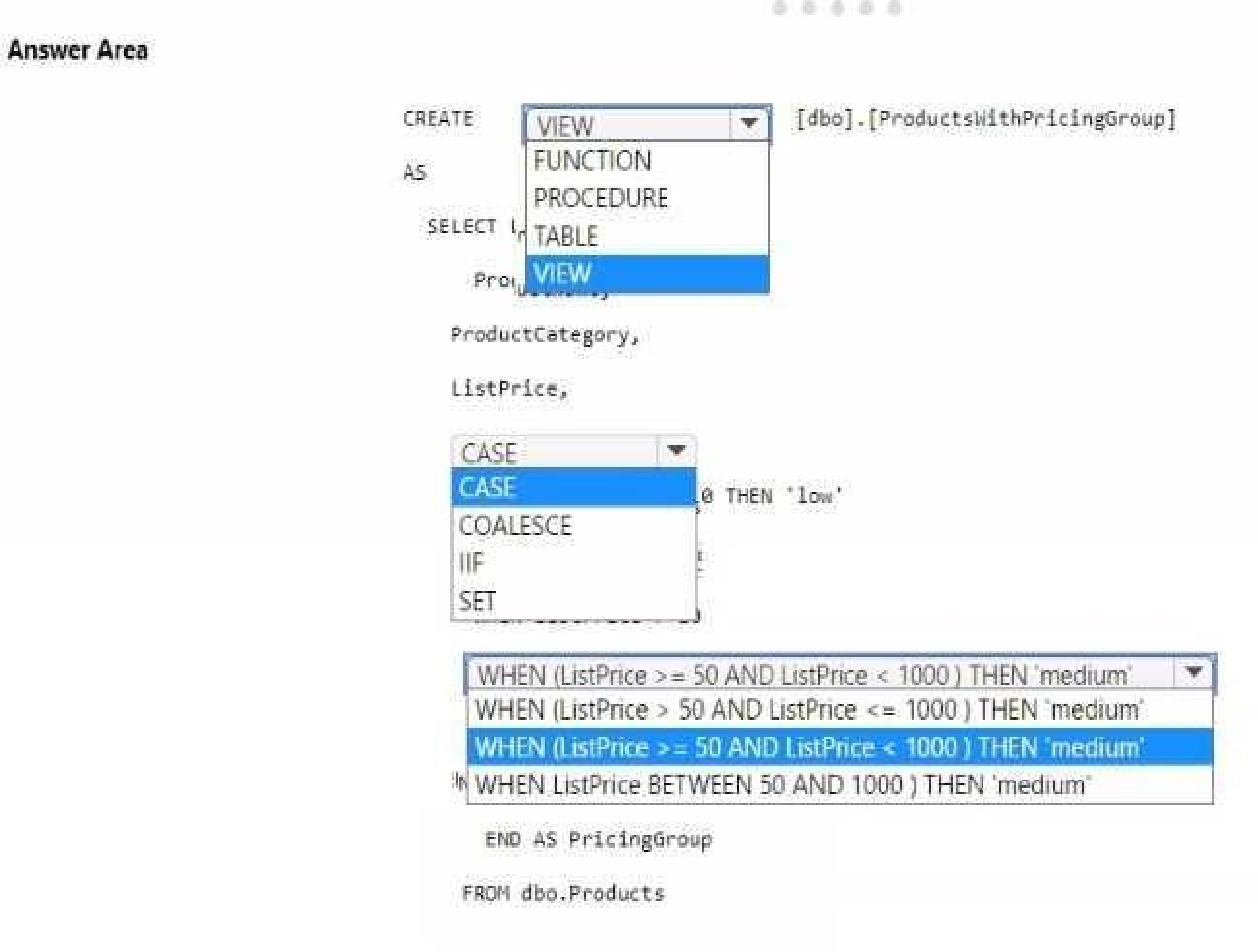

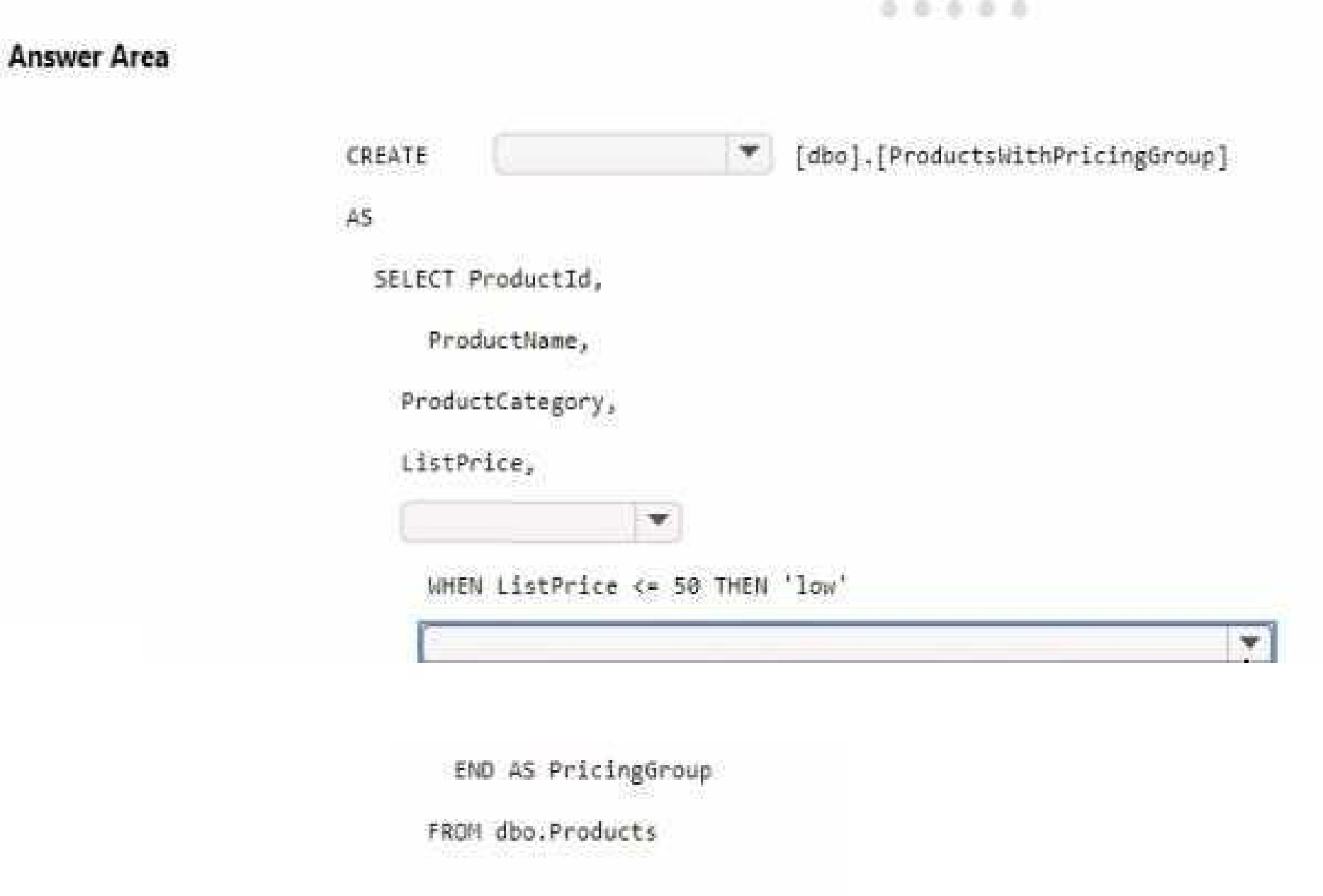

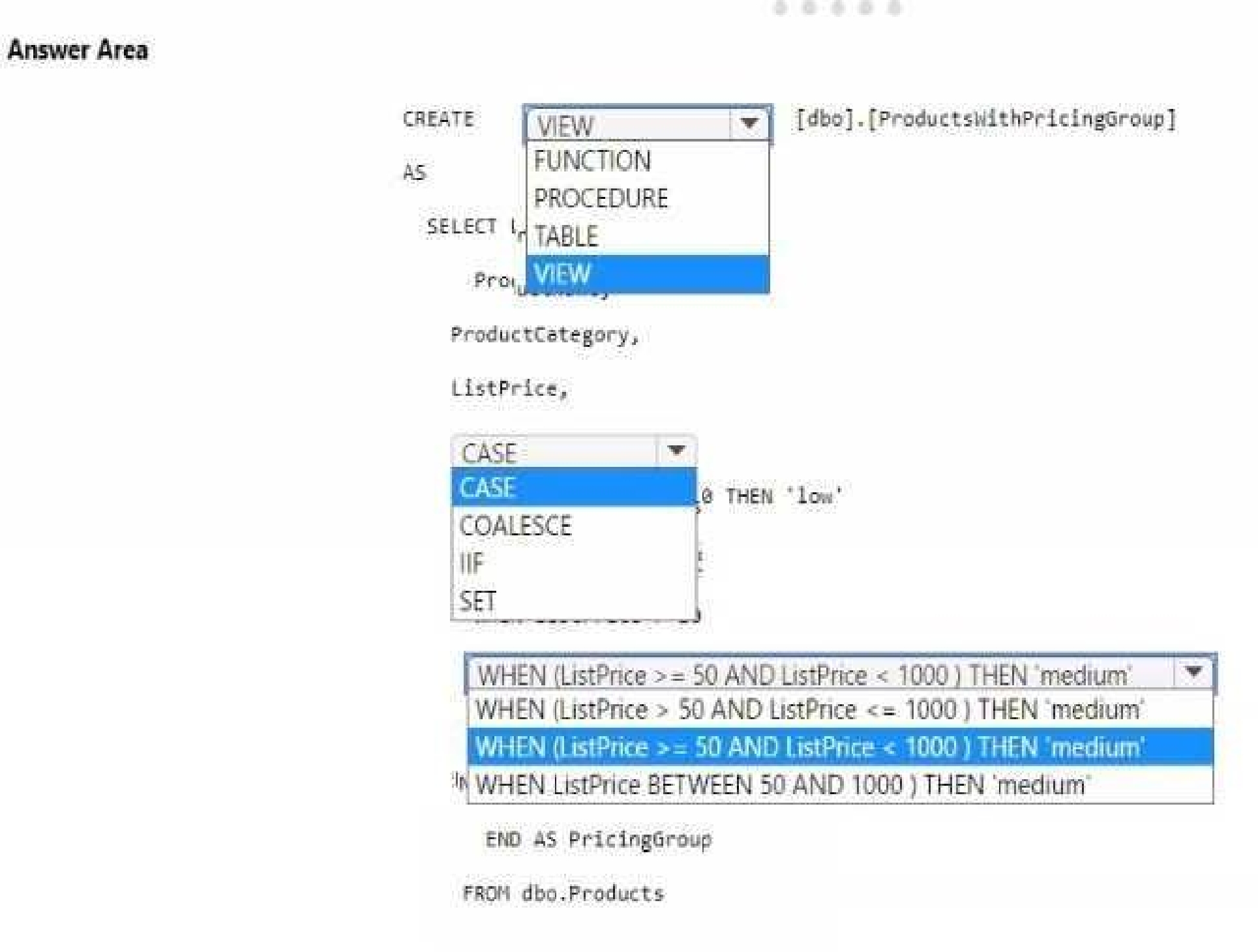

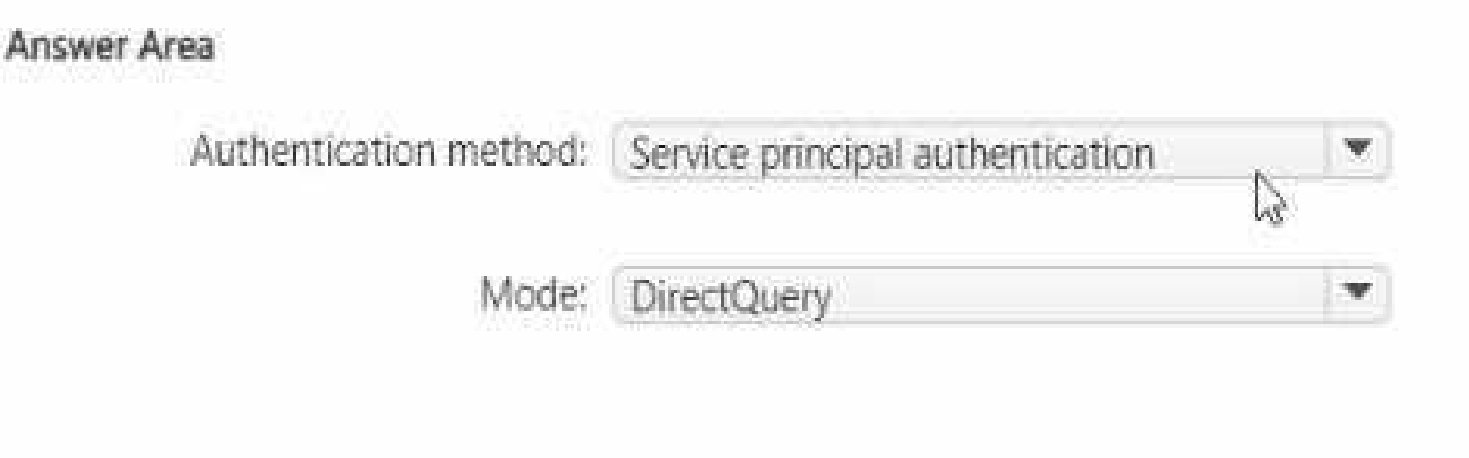

How should you complete the T-SQL statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Use CREATE VIEW to make the pricing group logic available for T-SQL queries.

Use a CASE expression to determine the pricing group based on the list price.

The T-SQL statement should create a view that classifies products into pricing groups based on the list price. A CASE expression is the correct conditional logic to assign each product to the appropriate pricing group. Defining the logic once in a view keeps the pricing groups consistent for T-SQL queries and for the semantic models that read from the data store.
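The view-plus-CASE pattern can be tried locally. The sketch below uses Python's built-in `sqlite3` module as a stand-in for the Fabric data store, so the SQL dialect is SQLite rather than T-SQL, although the CASE expression reads the same in both. The table name `Product`, the view name `vw_ProductPricingGroup`, and the columns `ProductID`, `ListPrice`, and `PricingGroup` are assumptions, because the actual column list is not shown in the case study text.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical product table with only the columns the example needs.
conn.execute("CREATE TABLE Product (ProductID INTEGER PRIMARY KEY, ListPrice REAL)")
conn.executemany(
    "INSERT INTO Product (ProductID, ListPrice) VALUES (?, ?)",
    [(1, 50.0), (2, 50.01), (3, 1000.0), (4, 1000.01)],
)

# The pricing group logic lives in one place: the view definition.
conn.execute(
    """
    CREATE VIEW vw_ProductPricingGroup AS
    SELECT
        ProductID,
        ListPrice,
        CASE
            WHEN ListPrice <= 50 THEN 'low'
            WHEN ListPrice <= 1000 THEN 'medium'
            ELSE 'high'
        END AS PricingGroup
    FROM Product
    """
)

# Any consumer that queries the view gets the same classification.
groups = dict(conn.execute("SELECT ProductID, PricingGroup FROM vw_ProductPricingGroup"))
```

Because the WHEN branches are evaluated in order, the second branch only needs the upper bound: any price that reaches it is already greater than 50.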

Quiz

Overview

Litware. Inc. is a manufacturing company that has offices throughout North America. The analytics

team at Litware contains data engineers, analytics engineers, data analysts, and data scientists.

Existing Environment

litware has been using a Microsoft Power Bl tenant for three years. Litware has NOT enabled any

Fabric capacities and features.

Fabric Environment

Litware has data that must be analyzed as shown in the following table.

The Product data contains a single table and the following columns.

The customer satisfaction data contains the following tables:

• Survey

• Question

• Response

For each survey submitted, the following occurs:

• One row is added to the Survey table.

• One row is added to the Response table for each question in the survey.

The Question table contains the text of each survey question. The third question in each survey

response is an overall satisfaction score. Customers can submit a survey after each purchase.

User Problems

The analytics team has large volumes of data, some of which is semi-structured. The team wants to

use Fabric to create a new data store.

Product data is often classified into three pricing groups: high, medium, and low. This logic is

implemented in several databases and semantic models, but the logic does NOT always match across

implementations.

Planned Changes

Litware plans to enable Fabric features in the existing tenant. The analytics team will create a new

data store as a proof of concept (PoC). The remaining Litware users will only get access to the Fabric

features once the PoC is complete. The PoC will be completed by using a Fabric trial capacity.

The following three workspaces will be created:

• AnalyticsPOC: Will contain the data store, semantic models, reports, pipelines, dataflows, and

notebooks used to populate the data store

• DataEngPOC: Will contain all the pipelines, dataflows, and notebooks used to populate Onelake

• DataSciPOC: Will contain all the notebooks and reports created by the data scientists

The following will be created in the AnalyticsPOC workspace:

• A data store (type to be decided)

• A custom semantic model

• A default semantic model

• Interactive reports

The data engineers will create data pipelines to load data to OneLake either hourly or daily

depending on the data source. The analytics engineers will create processes to ingest transform, and

load the data to the data store in the AnalyticsPOC workspace daily. Whenever possible, the data

engineers will use low-code tools for data ingestion. The choice of which data cleansing and

transformation tools to use will be at the data engineers' discretion.

All the semantic models and reports in the Analytics POC workspace will use the data store as the

sole data source.

Technical Requirements

The data store must support the following:

• Read access by using T-SQL or Python

• Semi-structured and unstructured data

• Row-level security (RLS) for users executing T-SQL queries

Files loaded by the data engineers to OneLake will be stored in the Parquet format and will meet

Delta Lake specifications.

Data will be loaded without transformation in one area of the AnalyticsPOC data store. The data will

then be cleansed, merged, and transformed into a dimensional model.

The data load process must ensure that the raw and cleansed data is updated completely before

populating the dimensional model.
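
The ordering requirement above can be illustrated with a minimal sketch. It assumes each load stage is a plain Python callable; the stage names and the `run_in_order` helper are hypothetical and stand in for whatever pipeline activities or notebooks are actually used. The point is only that a later stage starts after the earlier one has finished, and that a failure stops the chain.

```python
from typing import Callable, Sequence


def run_in_order(stages: Sequence[tuple[str, Callable[[], None]]]) -> list[str]:
    """Run each stage to completion before starting the next one.

    If a stage raises, the exception propagates and no later stage runs,
    so the dimensional model is never populated from partial data.
    """
    completed = []
    for name, stage in stages:
        stage()  # blocks until this stage has finished
        completed.append(name)
    return completed


if __name__ == "__main__":
    # Hypothetical stage bodies; real ones would load and transform data.
    order = run_in_order(
        [
            ("raw", lambda: None),
            ("cleansed", lambda: None),
            ("dimensional", lambda: None),
        ]
    )
    print(order)
```

In a pipeline tool, the same idea is usually expressed by chaining activities on success rather than running them in parallel.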

The dimensional model must contain a date dimension. There is no existing data source for the date

dimension. The Litware fiscal year matches the calendar year. The date dimension must always

contain dates from 2010 through the end of the current year.
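
Because there is no source for the date dimension, it has to be generated. The following is a minimal sketch of that idea, assuming one row per calendar day; the function name and the column names (`DateKey`, `FiscalYear`, and so on) are illustrative and do not come from the case study. Passing the current date in as a parameter keeps the end of the range tied to the current year.

```python
from datetime import date, timedelta


def build_date_dimension(today: date, start_year: int = 2010) -> list[dict]:
    """Return one row per day from 1 January of start_year through
    31 December of the year that contains `today`."""
    first_day = date(start_year, 1, 1)
    last_day = date(today.year, 12, 31)
    rows = []
    current = first_day
    while current <= last_day:
        rows.append(
            {
                # Integer key in yyyymmdd form (an assumed convention).
                "DateKey": current.year * 10000 + current.month * 100 + current.day,
                "Date": current,
                "Year": current.year,
                "Quarter": (current.month - 1) // 3 + 1,
                "Month": current.month,
                # The fiscal year matches the calendar year per the case study.
                "FiscalYear": current.year,
            }
        )
        current += timedelta(days=1)
    return rows


if __name__ == "__main__":
    rows = build_date_dimension(date.today())
    print(rows[0]["Date"], rows[-1]["Date"], len(rows))
```

Rerunning the generation as part of the daily load is one way to make sure the table always extends to the end of the current year.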

The product pricing group logic must be maintained by the analytics engineers in a single location.

The pricing group data must be made available in the data store for T-SQL queries and in the default

semantic model. The following logic must be used:

• List prices that are less than or equal to 50 are in the low pricing group.

• List prices that are greater than 50 and less than or equal to 1,000 are in the medium pricing

group.

• List prices that are greater than 1,000 are in the high pricing group.
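
The three rules above leave no gaps or overlaps at the boundaries, which is easy to check with a small sketch. The function name `pricing_group` is hypothetical; in the data store itself the same logic would more likely live in a single view so that both T-SQL queries and the default semantic model can see it. The Python version below only illustrates the boundary behaviour.

```python
def pricing_group(list_price: float) -> str:
    """Classify a list price using the case study thresholds."""
    if list_price <= 50:
        return "low"
    if list_price <= 1000:
        # Reached only when list_price > 50.
        return "medium"
    return "high"


if __name__ == "__main__":
    for price in (50, 50.01, 1000, 1000.01):
        print(price, pricing_group(price))
```

Note that exactly 50 is low and exactly 1,000 is medium, which is where mismatched implementations tend to disagree.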

Security Requirements

Only Fabric administrators and the analytics team must be able to see the Fabric items created as

part of the PoC. Litware identifies the following security requirements for the Fabric items in the

AnalyticsPOC workspace:

• Fabric administrators will be the workspace administrators.

• The data engineers must be able to read from and write to the data store. No access must be

granted to datasets or reports.

• The analytics engineers must be able to read from, write to, and create schemas in the data store.

They also must be able to create and share semantic models with the data analysts and view and

modify all reports in the workspace.

• The data scientists must be able to read from the data store, but not write to it. They will access

the data by using a Spark notebook.

• The data analysts must have read access to only the dimensional model objects in the data store.

They also must have access to create Power BI reports by using the semantic models created by the

analytics engineers.

• The date dimension must be available to all users of the data store.

• The principle of least privilege must be followed.

Both the default and custom semantic models must include only tables or views from the

dimensional model in the data store. Litware already has the following Microsoft Entra security

groups:

• FabricAdmins: Fabric administrators

• AnalyticsTeam: All the members of the analytics team

• DataAnalysts: The data analysts on the analytics team

• DataScientists: The data scientists on the analytics team

• DataEngineers: The data engineers on the analytics team

• AnalyticsEngineers: The analytics engineers on the analytics team

Report Requirements

The data analysts must create a customer satisfaction report that meets the following requirements:

• Enables a user to select a product to filter customer survey responses to only those who have

purchased that product

• Displays the average overall satisfaction score of all the surveys submitted during the last 12

months up to a selected date

• Shows data as soon as the data is updated in the data store

• Ensures that the report and the semantic model only contain data from the current and previous

year

• Ensures that the report respects any table-level security specified in the source data store

• Minimizes the execution time of report queries
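
The "last 12 months up to a selected date" requirement can be made concrete with a short sketch. It assumes each response is a tuple of (submission date, question number, score) and that the window runs from just after the same day one year earlier through the selected date inclusive; both the tuple layout and that boundary choice are assumptions, not details from the case study. In the report itself this would normally be a measure in the semantic model rather than Python.

```python
from datetime import date
from statistics import mean
from typing import Iterable, Optional

# The third question of each survey holds the overall satisfaction score.
OVERALL_SATISFACTION_QUESTION = 3


def one_year_before(d: date) -> date:
    """Same calendar day one year earlier; 29 February maps to 28 February."""
    try:
        return d.replace(year=d.year - 1)
    except ValueError:
        return d.replace(year=d.year - 1, day=28)


def average_satisfaction(
    responses: Iterable[tuple[date, int, float]], selected: date
) -> Optional[float]:
    """Average overall satisfaction for surveys submitted in the 12 months
    ending on `selected` (start exclusive, end inclusive)."""
    window_start = one_year_before(selected)
    scores = [
        score
        for submitted, question_no, score in responses
        if question_no == OVERALL_SATISFACTION_QUESTION
        and window_start < submitted <= selected
    ]
    return mean(scores) if scores else None


if __name__ == "__main__":
    sample = [
        (date(2024, 1, 10), 3, 4),
        (date(2023, 6, 1), 3, 2),
        (date(2024, 1, 5), 1, 1),  # not the overall satisfaction question
    ]
    print(average_satisfaction(sample, date(2024, 1, 10)))
```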

Quiz

Which two actions should you recommend performing from the Fabric Admin portal? Each correct

answer presents part of the solution.

NOTE: Each correct answer is worth one point.

Quiz

appropriate sequence. The solution must meet the technical requirements.

What should you do?

Quiz

requirements.

What are two ways to achieve the goal? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

Quiz

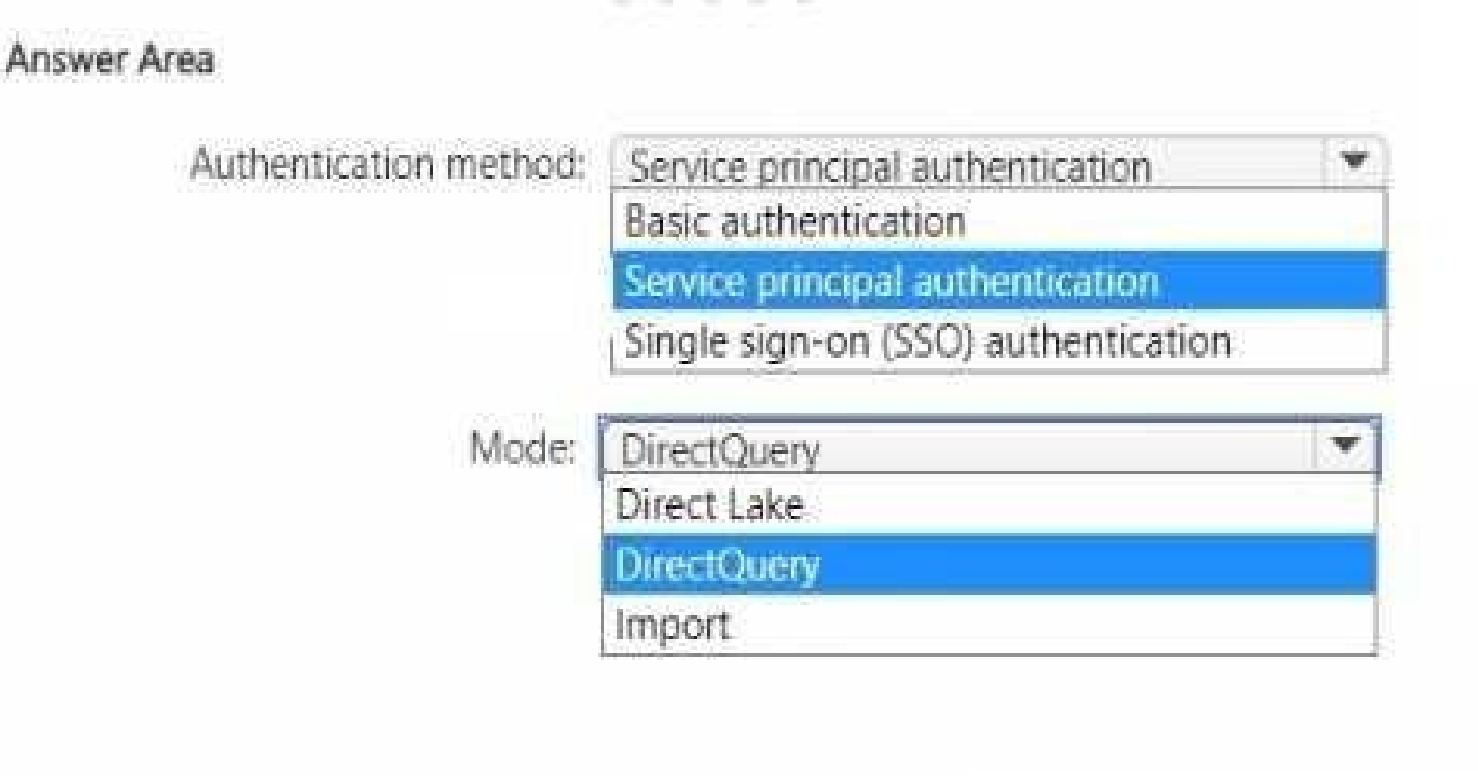

Which data source authentication method and mode should you use? To answer, select the

appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

For the semantic model design required for the customer satisfaction report, the choices for data

source authentication method and mode should be made based on security and performance

considerations as per the case study provided.

Authentication method: The data should be accessed securely, and given that row-level security

(RLS) is required for users executing T-SQL queries, you should use an authentication method that

supports RLS. Service principal authentication is suitable for automated and secure access to the

data, especially when the access needs to be controlled programmatically and is not tied to a specific

user's credentials.

Mode: The report needs to show data as soon as it is updated in the data store, and it should only

contain data from the current and previous year. DirectQuery mode allows for real-time reporting

without importing data into the model, thus meeting the need for up-to-date data.

It also allows for RLS to be implemented and enforced at the data source level, providing the

necessary security measures.

Based on these considerations, the selections should be:

Authentication method: Service principal authentication

Mode: DirectQuery

Quiz

Overview

Contoso, Ltd. is a US-based health supplements company. Contoso has two divisions named Sales and

Research. The Sales division contains two departments named Online Sales and Retail Sales. The

Research division assigns internally developed product lines to individual teams of researchers and

analysts.

Identity Environment

Contoso has a Microsoft Entra tenant named contoso.com. The tenant contains two groups named

ResearchReviewersGroup1 and ResearchReviewersGroup2.

Data Environment

Contoso has the following data environment:

• The Sales division uses a Microsoft Power BI Premium capacity.

• The semantic model of the Online Sales department includes a fact table named Orders that uses

import mode. In the system of origin, the OrderID value represents the sequence in which orders are

created.

• The Research department uses an on-premises, third-party data warehousing product.

• Fabric is enabled for contoso.com.

• An Azure Data Lake Storage Gen2 storage account named storage1 contains Research division data

for a product line named Productline1. The data is in the delta format.

• A Data Lake Storage Gen2 storage account named storage2 contains Research division data for a

product line named Productline2. The data is in the CSV format.

Planned Changes

Contoso plans to make the following changes:

• Enable support for Fabric in the Power BI Premium capacity used by the Sales division.

• Make all the data for the Sales division and the Research division available in Fabric.

• For the Research division, create two Fabric workspaces named Productline1ws and

Productline2ws.

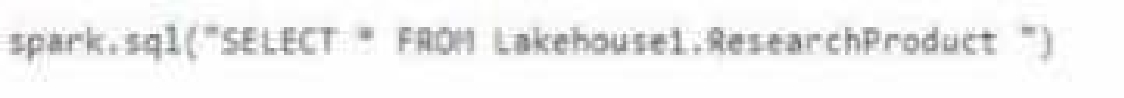

• In Productline1ws, create a lakehouse named Lakehouse1.

• In Lakehouse1, create a shortcut to storage1 named ResearchProduct.

Data Analytics Requirements

Contoso identifies the following data analytics requirements:

• All the workspaces for the Sales division and the Research division must support all Fabric

experiences.

• The Research division workspaces must use a dedicated, on-demand capacity that has per-minute

billing.

• The Research division workspaces must be grouped together logically to support OneLake data

hub filtering based on the department name.

• For the Research division workspaces, the members of ResearchReviewersGroup1 must be able to

read lakehouse and warehouse data and shortcuts by using SQL endpoints.

• For the Research division workspaces, the members of ResearchReviewersGroup2 must be able to

read lakehouse data by using Lakehouse explorer.

• All the semantic models and reports for the Research division must use version control that

supports branching.

Data Preparation Requirements

Contoso identifies the following data preparation requirements:

• The Research division data for Productline2 must be retrieved from Lakehouse1 by using Fabric

notebooks.

• All the Research division data in the lakehouses must be presented as managed tables in

Lakehouse explorer.
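
A minimal sketch of how a Fabric notebook could address both requirements, assuming the Productline2 CSV files are reachable from the lakehouse Files area. The function name, the path, and the table name are hypothetical; `spark` stands for the Spark session that a notebook provides. Writing with `saveAsTable` and no explicit path registers a managed table.

```python
def load_csv_as_managed_table(spark, csv_path, table_name):
    """Read CSV files and save them as a managed Delta table."""
    df = (
        spark.read.format("csv")
        .option("header", "true")  # assumption: the files have a header row
        .load(csv_path)
    )
    # No path option is given, so the table is created as a managed table.
    df.write.format("delta").mode("overwrite").saveAsTable(table_name)
    return df


# Hypothetical usage inside a notebook attached to Lakehouse1:
# load_csv_as_managed_table(spark, "Files/<shortcut-to-storage2>", "productline2")
```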

Semantic Model Requirements

Contoso identifies the following requirements for implementing and managing semantic models:

• The number of rows added to the Orders table during refreshes must be minimized.

• The semantic models in the Research division workspaces must use Direct Lake mode.
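
One way to read the first requirement: because OrderID follows the order in which orders are created, a refresh only needs the rows whose OrderID is above the highest value already loaded. The sketch below illustrates that high-water-mark idea in plain Python. The function name and the dictionary row shape are hypothetical, and this is not the Power BI incremental refresh feature itself.

```python
def rows_to_append(loaded_rows, source_rows, key="OrderID"):
    """Return only the source rows newer than the highest key already loaded."""
    high_water_mark = max((row[key] for row in loaded_rows), default=None)
    if high_water_mark is None:
        return list(source_rows)  # first load: nothing is loaded yet, take everything
    return [row for row in source_rows if row[key] > high_water_mark]
```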

General Requirements

Contoso identifies the following high-level requirements that must be considered for all solutions:

• Follow the principle of least privilege when applicable.

• Minimize implementation and maintenance effort when possible.



Info quiz:

- Quiz name: Microsoft Implementing Analytics Solutions Using Microsoft Fabric

- Total number of questions: 140

- Number of questions for the test: 50

- Pass score: 80%
